This report was prepared by Sian Loveless, NERC-Funded CSaP Policy Intern (February 2012 - May 2012)

Overly intelligent computers and robots could cause an ecological catastrophe marking the end of human existence. Not the plot of a science fiction film, but the warning delivered by Jaan Tallinn about the dangers of unmitigated technological advances in Artificial Intelligence, in the first CSaP Distinguished Lecture of 2012. This threat would result from the emergence of Artificial General Intelligence, or AGI, in which machines have self-awareness. The probability of this occurring is unknown, but Tallinn argues that it is above zero and therefore worthy of attention.

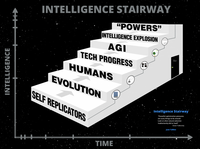

Tallinn introduced a model of the “Intelligence Stairway”, which describes the progression of the agents that control the Earth’s future as each is superseded by another agent of their own creation.

The first agents to control the Earth’s future were self-replicating genetic material, upon which evolution acted. We, Homo sapiens, are the third step, resulting from evolution. Tallinn suggests that the Earth is currently approaching the next step, where human-driven technological progression replaces evolution as the dominant future-shaping force. Just beyond this step, Tallinn foresees the emergence of AGI, an intelligence explosion, and the loss of our control over the Earth’s future. This would be possible as soon as a machine is able to take control of its own destiny. Once that point is reached, ecological catastrophe is almost inevitable.

Is a future controlled by AGI inevitable? Tallinn believes that if we’re smart, it might not be.

Tallinn suggests that AGI is most likely to emerge inadvertently from a bug in an AI program’s code. Currently, an estimated 300 people worldwide may be able to create AGI, but the risk is increased by recent trends for sudden advances in technology that are entirely unregulated. By increasing awareness of the threat of an AGI-controlled future, Tallinn hopes to kick-start a move towards a policy that would regulate against such accidents, ensuring we stay in control of our own destiny. A strong case was presented for serious consideration of the existential risks from AGI in policy.

An inevitably lively Q&A session followed the lecture, with questions ranging from the philosophical through to the practical, scientific and political – demonstrating the far-reaching interest in this topic.

Banner image from Andrew E. Larsen

1 February 2012, 5:30pm

CSaP Distinguished Lecture: The intelligence stairway

The first CSaP Distinguished Lecture in 2012 will be given by Jaan Tallinn, a co-founder of Skype, who will present a model for thinking about evolution, innovation, artificial intelligence and the future of humanity.